ML+Health Seminar Series

We are hosting the ML+Healthcare Seminar Series for Fall 2023!

Upcoming seminar:

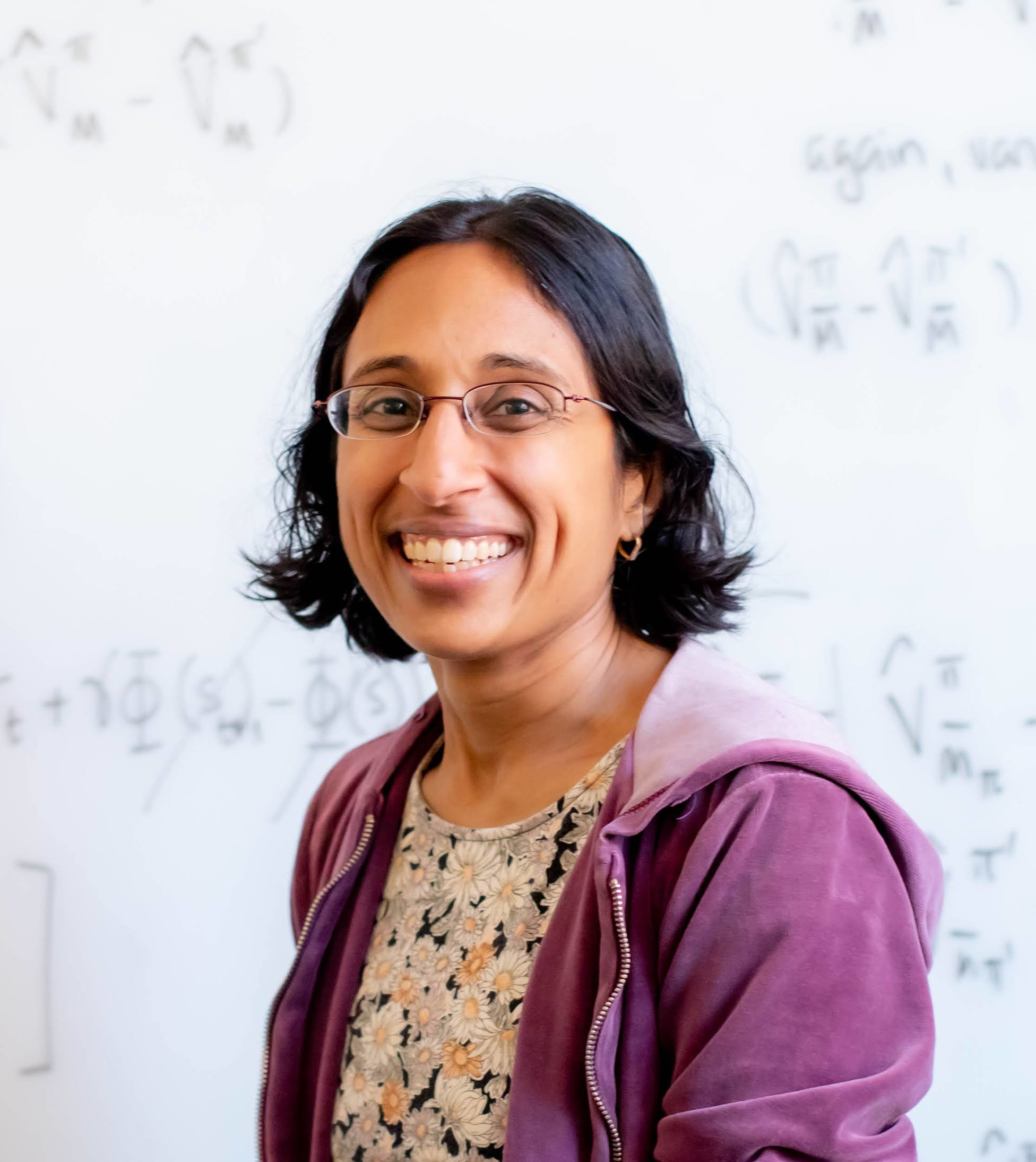

Decision making in healthcare settings: New methods to match the complexity of clinical data. - Barbara Engelhardt

November 30th 2:30pm - 3:30pm, Kiva

Decision-making tasks in healthcare settings use methods that make a number of assumptions that we know are violated in clinical data. For example, clinicians do not always act optimally; clinicians are more or less aggressive in treating patients; and patients have (often unobserved) conditions that lead to differential response to interventions. In this talk, I will walk through a handful of these violated assumptions and discuss reinforcement learning methods we have created to address them. I will show in a number of scenarios, including sepsis treatment and electrolyte repletion, that these methods, which make more flexible assumptions than existing methods, lead to substantial improvements in decision-making tasks in clinical settings.

Barbara E Engelhardt is a Senior Investigator at Gladstone Institutes and Professor at Stanford University in the Department of Biomedical Data Science. She received her B.S. (Symbolic Systems) and M.S. (Computer Science) from Stanford University and her PhD from UC Berkeley (EECS), advised by Prof. Michael I Jordan. She was a postdoctoral fellow with Prof. Matthew Stephens at the University of Chicago. She was an Assistant Professor at Duke University from 2011-2014, and an Assistant, Associate, and then Full Professor at Princeton University in Computer Science from 2014-2022. She has worked at Jet Propulsion Labs, Google Research, 23andMe, and Genomics plc. In her career, she received an NSF GRFP, the Google Anita Borg Scholarship, the SMBE Walter M. Fitch Prize (2004), a Sloan Faculty Fellowship, an NSF CAREER, and the ISCB Overton Prize (2021). Her research is focused on developing and applying models for structured biomedical data that capture patterns in the data, predict results of interventions to the system, assist with decision-making support, and prioritize experiments for design and engineering of biological systems.

Directions to Star Conference Room, 32-D463

The Star Conference room is on the 4th floor of the Dreyfoos Wing in the Stata Center (Building 32). Enter Building 32 at the front entrance and proceed straight ahead; there will be elevators to the right. Take the elevators to the 4th floor; exit to the left and then turn right at the end of the elevator bank. At the end of the short corridor, turn right, just before the R&D Dining room. The Star Conference Room is straight ahead, just past a set of stairs.

Directions to Patil/Kiva Seminar Room, 32-G449

The Patil/Kiva Seminar Room is on the 4th floor of the Gates Tower in the Stata Center (Building 32). Enter Building 32 at the front entrance and proceed straight ahead; there will be elevators to the right. Take the elevators to the 4th floor; exit to the left and then turn right at the end of the elevator bank. At the end of the short corridor, bear to the left and continue around the R&D Dining Room. CSAIL Headquarters will be to your left and the Patil/Kiva Seminar Room will be straight ahead.

Past Seminars:

Helping physicians make sense of medical evidence with Large Language Models - Byron Wallace

September 15th 3:30pm - 4:30pm, Star

Decisions about patient care should be supported by data. But much clinical evidence—from notes in electronic health records to published reports of clinical trials—is stored as unstructured text and so not readily accessible. The body of such unstructured evidence is vast and continues to grow at breakneck pace, overwhelming healthcare providers and ultimately limiting the extent to which patient care is informed by the totality of relevant data. NLP methods, particularly large language models (LLMs), offer a potential means of helping domain experts make better use of such data, and ultimately to improve patient care.

In this talk I will discuss recent and ongoing work on designing and evaluating LLMs as tools to help physicians and other domain experts navigate and make sense of unstructured biomedical evidence. These efforts suggest the potential of LLMs as an interface to unstructured evidence. But they also highlight key challenges, not least of which is ensuring that LLM outputs are factually accurate and faithful to source material.

Byron Wallace is the Sy and Laurie Sternberg Interdisciplinary Associate Professor and Director of the BS in Data Science program at Northeastern University in the Khoury College of Computer Sciences. His research is primarily in natural language processing (NLP) methods, with an emphasis on their application in healthcare and the challenges inherent to this domain.

Statistics When n Equals 1 - Ben Recht

September 29th 4:00pm - 5:00pm, Kiva

21st-century medicine embraces a statistical view of effectiveness. This view considers the implications of treatments and diseases as best understood on populations. But such population conclusions tell us little about what to do with any particular person. This talk will first describe some of the shortsightedness of population statistics when it comes to individual decision-making. As an alternative, I will outline how we might design treatments and interventions to help individuals directly. I will present a series of parallel projects that link ideas from optimization, control, and experiment design to create statistics and inform decisions for the individual. Though most recent work has focused on precision, focusing on smaller statistical populations, I will explain why optimization might better guide personalization.

Benjamin Recht is a Professor in the Department of Electrical Engineering and Computer Sciences at the University of California, Berkeley. His research has focused on applying mathematical optimization and statistics to problems in data analysis and machine learning. He is currently studying histories, methods, and theories of scientific validity and experimental design.

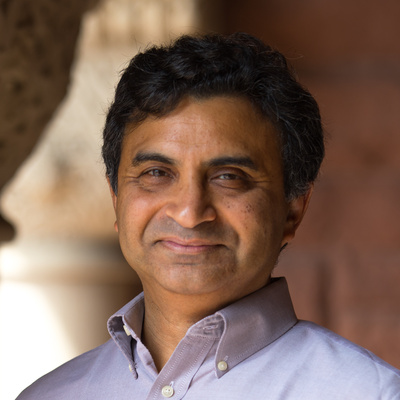

AI for social impact: Results from deployments for public health - Milind Tambe

October 13th 3:30pm - 4:30pm, Kiva

For more than 15 years, my team and I have been focused on AI for social impact, deploying end-to-end systems in areas of public health, conservation and public safety. In this talk, I will highlight the results from our deployments for public health, as well as the AI and multiagent systems innovations that were necessary. I will first present our work with the world’s two largest mobile health programs for maternal and child care that have served millions of beneficiaries. In one of these, our deployment significantly cut attrition and doubled health information exposure for those with least access. Additionally, I will highlight results from an earlier project on HIV prevention and others. The key challenge in all of these applications is one of optimizing limited intervention resources. To address this challenge, I will discuss advances in restless multi-armed bandits, decision-focused learning in predict-then-optimize systems, and influence maximization in social networks, and also discuss their broader applicability. In pushing this research agenda, our ultimate goal is to enable local communities and non-profits to benefit directly from advances in AI tools and techniques.

Milind Tambe is Gordon McKay Professor of Computer Science and Director of the Center for Research in Computation and Society at Harvard University; concurrently, he is also Principal Scientist and Director for "AI for Social Good" at Google Research. He is a recipient of the IJCAI John McCarthy Award, AAAI Feigenbaum Prize, AAAI Robert S. Engelmore Memorial Lecture Award, AAMAS ACM Autonomous Agents Research Award, INFORMS Wagner Prize for excellence in Operations Research practice, and MORS Rist Prize. He is a fellow of AAAI and ACM. For his work on AI and public safety, he has received the Columbus Fellowship Foundation Homeland Security Award and commendations and certificates of appreciation from the US Coast Guard, the Federal Air Marshals Service, and airport police at the city of Los Angeles.

Towards Interpretable and Trustworthy RL for Healthcare - Finale Doshi-Velez

October 27th 12:30pm - 1:30pm, Star

Reinforcement learning has the potential to take into account the many factors about a patient and identify a personalized treatment strategy that will lead to better long-term outcomes. In this talk, I will focus on the offline, or batch, setting: we are given a large amount of prior clinical data, and from those interactions, our goal is to propose a better treatment policy. This setting is common in healthcare, where both safety and compliance concerns make it difficult to train a reinforcement learning agent online. However, when we cannot actually execute our proposed actions, we have to be extra careful that our reinforcement learning agent does not hallucinate bad actions as good ones.

Toward this goal, I will first discuss how the limitations of batch data can actually be a feature when it comes to interpretability. I will share an offline RL algorithm that takes advantage of the fact that we can only make inferences about alternative treatments when clinicians have tried many alternatives, producing policies that not only have higher statistical confidence but are also compact enough for human experts to inspect. Next, I will touch on questions of reward design and on taking advantage of the fact that our batch of data was produced by experts. Can we expose to the clinicians what their behaviors seem to be optimizing? Can we identify situations in which what a clinician claims is their reward does not match their actions? Can we perform offline RL with all the great qualities above in a way that is robust to reward misspecification and that takes into account that clinicians are, in general, doing their best? Our work in these areas brings us closer to realizing the potential of RL in healthcare.

Finale Doshi-Velez is a Gordon McKay Professor in Computer Science at the Harvard Paulson School of Engineering and Applied Sciences. She completed her MSc from the University of Cambridge as a Marshall Scholar, her PhD from MIT, and her postdoc at Harvard Medical School. Her interests lie at the intersection of machine learning, healthcare, and interpretability.

Generative AI for Clinical Trial Development - Jimeng Sun

October 31st 2:30pm - 3:30pm, Kiva

We present three recent papers on how Generative AI can help clinical trial development:

- TrialGPT: Matching Patients to Clinical Trials. Recruiting the right patients quickly can be challenging in clinical trials. TrialGPT uses Large Language Models (LLMs) to predict a patient’s suitability for various trials based on their medical notes, making it easier to find the best match.

- AutoTrial: Improving Clinical Trial Eligibility Criteria Design. Designing eligibility criteria for clinical trials can be complex. AutoTrial uses language models to streamline this process. It combines targeted generation, adapts to new information, and clearly explains its decisions.

- MediTab: Handling Diverse Clinical Trial Data Tables. Medical data, such as clinical trial results, comes in various tables, making it hard to compare and combine. MediTab uses LLMs to merge different data tables and align unfamiliar data to ensure consistency and accuracy.

Dr. Sun is a Health Innovation Professor at the Computer Science Department and Carle Illinois College of Medicine at the University of Illinois Urbana-Champaign. Previously, he was an associate professor at Georgia Tech's College of Computing and co-directed the Center for Health Analytics and Informatics. Dr. Sun's research focuses on using artificial intelligence (AI) to improve healthcare. This includes deep learning for drug discovery, clinical trial optimization, computational phenotyping, clinical predictive modeling, treatment recommendation, and health monitoring. He has been recognized as one of the Top 100 AI Leaders in Drug Discovery and Advanced Healthcare. Dr. Sun has published over 300 papers with over 25,000 citations and an h-index of 81. He collaborates with leading hospitals such as MGH, Beth Israel Deaconess, OSF HealthCare, Northwestern, Sutter Health, Vanderbilt, Geisinger, and Emory, as well as the biomedical industry, including IQVIA, Medidata, and multiple pharmaceutical companies. Dr. Sun earned his B.S. and M.Phil. in computer science at Hong Kong University of Science and Technology, and his Ph.D. in computer science at Carnegie Mellon University.

15+ Years in the Making - When will the Digital Mental Health Revolution Deliver on its Promise? - Tanzeem Choudhury

November 2nd 2:30pm - 3:30pm, Kiva

The power of sensors, algorithms, and AI was supposed to fix much that is wrong with mental healthcare by replacing poor measurements and enabling robust tracking of response to treatments and timely delivery of personalized interventions. Yet much of mental health care and care delivery today remains the same, without leveraging the advances made by technology. In this talk, I will reflect on the progress, the gaps, and thoughts on making Digital Mental Health a success story.

Tanzeem Choudhury is a Professor of Computing and Information Sciences at Cornell Tech, where she holds the Roger and Joelle Burnell Chair in Integrated Health and Technology. From 2021-2023, she served as the Senior Vice President of Digital Health at Optum Labs, and she is a co-founder of HealthRhythms Inc, a company whose mission is to add the layer of behavioral health into all of healthcare. At Cornell, she directs the People-Aware Computing group, which focuses on innovating the future of technology-assisted well-being. Tanzeem received her PhD from the Media Laboratory at MIT and her undergraduate degree in Electrical Engineering from University of Rochester. She has been awarded the MIT Technology Review TR35 award, NSF CAREER award, TED Fellowship, Kavli Fellowship, and Ubicomp 10yr Impact Award (2016, 2022), and has been elected as an ACM Fellow and inducted into the ACM SIGCHI Academy.